Age of Understanding Series

Why has it taken so long to go back to the Moon?

It’s a question that feels almost absurd when you first ask it.

We went to the Moon in 1969.

We walked on its surface.

We went back, landing five more times by the end of 1972.

And then… we stopped.

More than fifty years later, we’re only now preparing to go back.

So what happened?

Did we lose the technology?

Did we lose the will?

Or did something else change?

We Didn’t Stop Because We Couldn’t

The common assumption is that returning to the Moon should be easy.

After all, we’ve already done it.

But the truth is more complex—and more revealing.

The Apollo program was never just about exploration.

It was about urgency.

It was about winning the Space Race, and proving on a global stage that we could get there first.

And when that goal was achieved, something quietly shifted.

The urgency disappeared.

When the Reason Fades, So Does the Momentum

The Apollo missions were fueled by an extraordinary level of investment—financial, political, and cultural.

But once the objective was met, the question became:

Why continue?

The answer, at the time, wasn’t compelling enough.

Funding shifted. Priorities changed.

Attention moved toward:

Low Earth orbit

The International Space Station

Robotic exploration

The Moon, for a time, became something we had already done.

We Didn’t Just Pause—We Moved On

Exploration didn’t stop.

It expanded.

We sent rovers to Mars.

We built telescopes that could see back in time.

We began to understand our own planet from space in ways we never had before.

The Moon wasn’t abandoned.

It was… deprioritized.

And Then, Something Subtle Happened

When we decided to return, we didn’t decide to repeat the past.

We changed the question.

Apollo asked:

Can we get there?

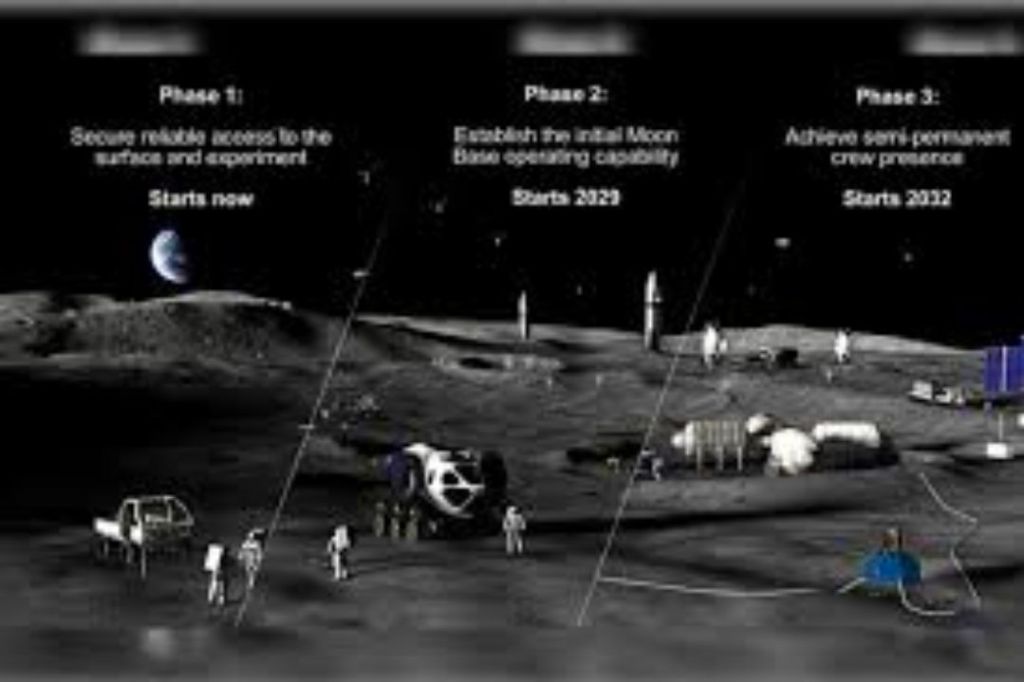

Today, through the Artemis program, we are asking something far more difficult:

Can we stay?

This Is Where Things Get Complicated

Going to the Moon once is a technical achievement.

Building a sustained presence there is something else entirely.

It requires:

New systems

New infrastructure

New ways of thinking about risk, safety, and sustainability

Even the tools we once used no longer exist in their original form.

The Saturn V is gone.

The supply chains are gone.

Much of what we are doing now is not a continuation.

It is reconstruction—at a higher standard.

Why the Moon—Again?

If the goal is long-term exploration, why not go straight to Mars?

Why return to a place we’ve already been?

Because distance changes everything.

The Moon is three days away.

Mars is months away.

On the Moon, we can:

Test systems

Adapt quickly

Learn from failure

On Mars, we cannot, not without waiting months for help or a way home.

And so the Moon becomes something unexpected:

Not the destination.

But the proving ground.

The Shift from Exploration to Infrastructure

This is where the story changes.

We are no longer planning missions.

We are planning systems.

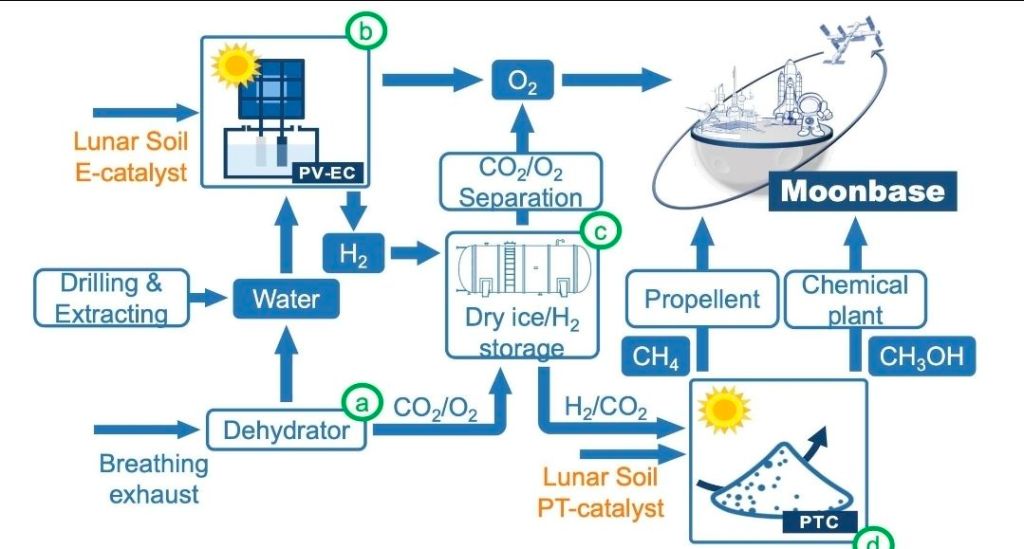

At the Moon’s south pole, there is water ice, locked in permanently shadowed craters and preserved over time.

That water can be transformed.

Not just into something we use…

But into something we build with.

Fuel, from its hydrogen and oxygen.

Air, from its oxygen.

Sustainability.

The Moon begins to look less like a place we visit…

and more like a place we use to go further.

The Gateway We Didn’t Expect

If we can produce fuel on the Moon, something fundamental changes.

Space travel no longer begins and ends on Earth.

It becomes layered.

Connected.

Possible in ways it never was before.

The Moon becomes:

A refueling point

A testing ground

A foundation

And in that role, it quietly becomes more important than Mars—at least for now.

The Deeper Question

This is where the surface-level question reveals something more.

“Why has it taken so long to go back to the Moon?”

Because we are no longer trying to go back.

We are trying to go forward—differently.

More deliberately.

More sustainably.

With a deeper understanding of what it actually means to leave Earth.

A Final Thought

We rushed to the Moon once—because we needed to prove that we could.

We are returning now—because we need to understand what comes next.

Not just how to arrive.

But how to remain.

And perhaps that is the real shift of our time:

From capability…

to responsibility.